It's 2026, and the AI image generation landscape is more crowded than ever. Midjourney, Stable Diffusion, Adobe Firefly: you name it, I've probably tried it. Yet when I get down to brass tacks, I always find myself coming back to DALL-E. People often ask me, 'With all these fancy new options, why stick with the OG?' Well, let me tell you my story.

🤔 Simplicity is Key, My Friend

Look, I'm all about that 'work smarter, not harder' life. If a tool isn't intuitive, it's a no-go for me. That's where DALL-E absolutely kills it. The process is so straightforward it's almost laughable. Log into ChatGPT, type what's in your mind's eye, and boom—you've got an image in minutes. No complicated settings, no endless dropdown menus. Just pure, unadulterated creativity.

Of course, the real art is in the prompt. You can't just say 'make a cool picture' and expect magic. You gotta be specific, paint with words. It's like giving directions to a very talented, but slightly literal, artist friend. Over the years, I've honed my prompt-crafting skills to an art form. I know that adding details like 'cinematic lighting,' 'hyperrealistic,' or 'in the style of Studio Ghibli' can completely change the game.
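
If it helps to see that idea spelled out, here's a tiny Python sketch of how I think about stacking details onto a subject. Fair warning: `build_prompt` is a made-up helper of mine, not part of any DALL-E or ChatGPT API, and the lighthouse subject is just an illustration. All it does is assemble the text you'd paste into the chat.

```python
def build_prompt(subject, modifiers=()):
    """Join a subject with optional style modifiers into one prompt string.

    Hypothetical helper for illustration only; it does not call any image API.
    """
    parts = [subject.strip()]
    # Skip empty or whitespace-only modifiers so the prompt stays clean.
    parts.extend(m.strip() for m in modifiers if m.strip())
    return ", ".join(parts)


# A vague prompt: nothing for the "literal artist friend" to work with.
vague = build_prompt("a cool picture")

# A specific prompt: a concrete subject plus the kinds of details mentioned above.
specific = build_prompt(
    "a lighthouse on a cliff at dusk",
    ["cinematic lighting", "in the style of Studio Ghibli"],
)

print(vague)
print(specific)
```

The point isn't the code itself, just the habit: start with a concrete subject, then layer on lighting and style one detail at a time so you can see which one actually changed the result.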

📈 The Evolution: From Clunky to Crisp

Let's be real, DALL-E wasn't always this slick. I've been riding this wave since the early days, and man, have I seen some... interesting results. Weird fingers, garbled text, landscapes that looked like they were melting. But here's the thing—the progress has been nothing short of mind-blowing.

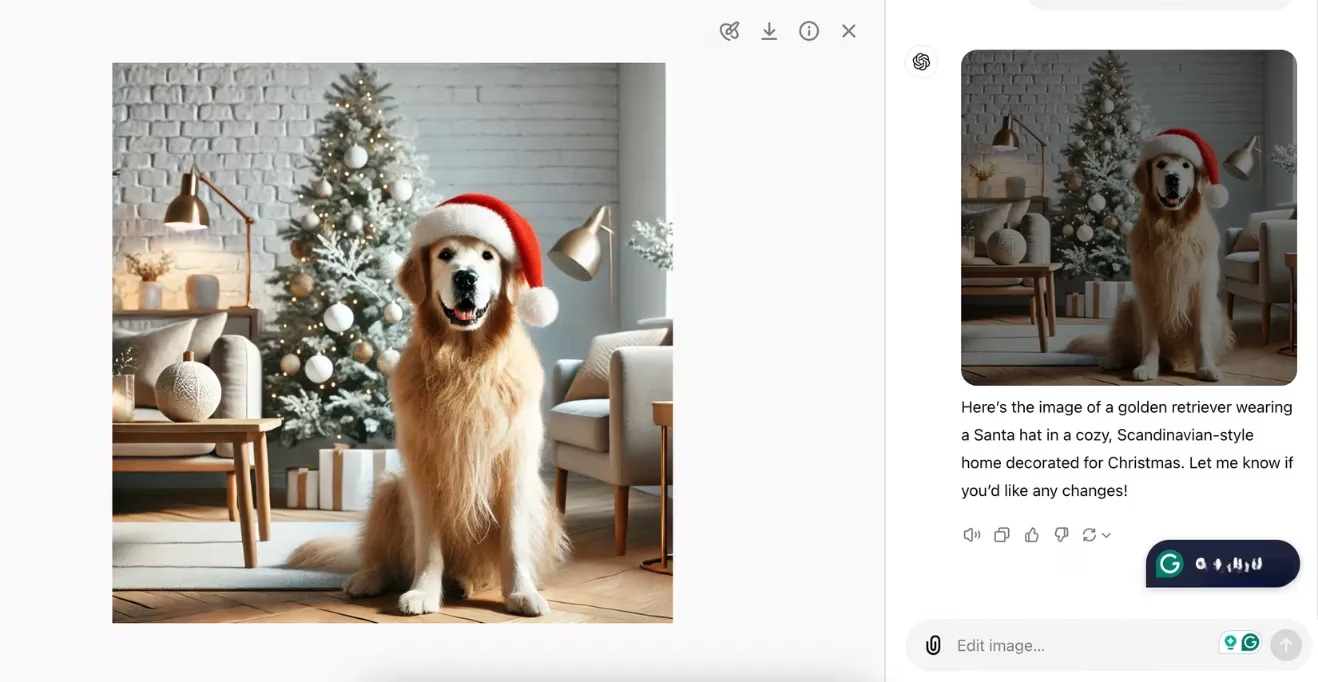

Fast forward to 2026, and the quality is on another level. The consistency is wild. I can throw a prompt at it, and 9 times out of 10, it nails the concept on the first or second try. The defects are few and far between, especially compared to some other generators that still have a 'glitchy' feel to them. It's clear the model has been gobbling up data and learning at an insane pace. While I still wouldn't use it to pass off images as real photos (that's a big no-no in my book), the photorealism for certain subjects is getting scarily good.

🏠 The All-in-One Ecosystem: My Digital Home Base

This is a huge one for me. I'm a minimalist at heart. The fewer apps I have to juggle, the better. Having DALL-E and ChatGPT living under the same roof is a game-changer. I can be brainstorming article ideas in one chat, then seamlessly switch to generating a header image in the next, all without leaving the tab. No copying, no pasting, no context switching that murders my flow state.

The unified sidebar is my command center. All my creative conversations and visual experiments are right there, side-by-side. It makes the whole process feel less like using disparate tools and more like working in a single, powerful creative studio. Sure, I wish the UI had a few more bells and whistles sometimes, but the convenience factor is off the charts.

🎨 The Editing Game Changer

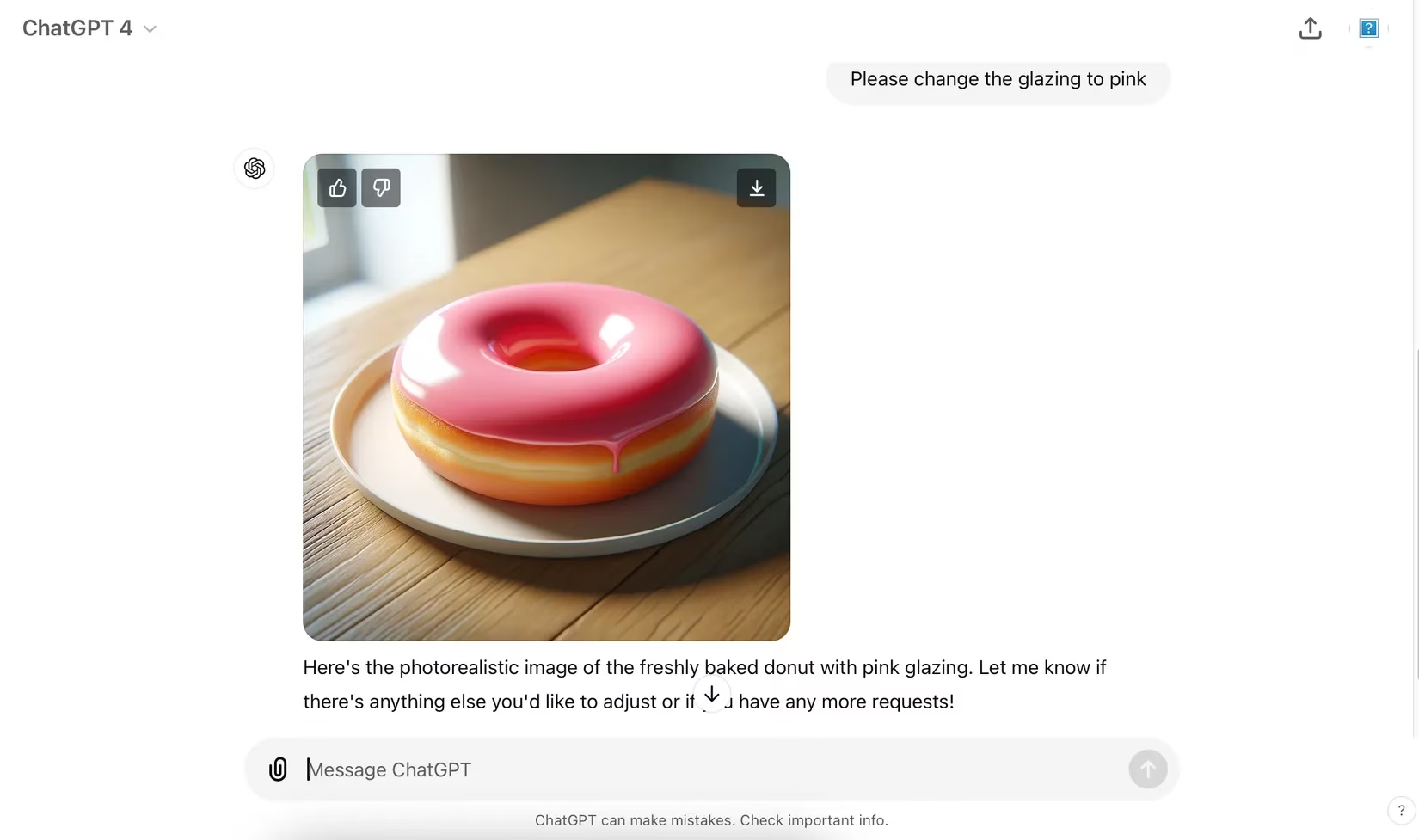

Remember when editing a DALL-E image meant regenerating the whole thing from scratch? Dark times. Want to change the color of a character's shirt? Back to the prompt drawing board you go. It was my biggest gripe for the longest time.

The introduction of native inpainting and outpainting tools was a total game-changer. Now, I can be surgical.

| Before Native Editing | After Native Editing |
|---|---|
| Frustrating full regenerations | Precise, localized edits |
| Time-consuming trial and error | Quick adjustments with a brush tool |
| Often abandoned good concepts | Saved countless nearly-perfect images |

You just select the area you want to tweak, adjust the brush size, and give it a new instruction. The tools are still evolving—they can be a bit finicky with complex textures—but they've moved the needle from 'generator' to 'collaborative creative suite.' I often dream of a full canvas feature like some other apps have, but for now, this is a massive leap forward.

📱 Truly Cross-Platform: Creativity on the Go

Some AI tools feel like they're chained to the desktop. Not DALL-E. The fact that I can fire up the ChatGPT app on my phone or tablet while I'm out and about and generate an image is incredibly liberating. Inspiration doesn't keep office hours. Maybe I'm at a cafe, see an interesting pattern, and think, 'What if that was a dragon's scale?' Bam. I can sketch the idea out in words right then and there.

This flexibility means I can:

- Iterate on ideas during my commute
- Make quick adjustments based on client feedback, no matter where I am
- Capture fleeting moments of inspiration before they vanish

It turns dead time into productive, creative time.

🧠 Deep Knowledge: The Unfair Advantage

Here's the secret sauce that no one talks about enough: familiarity. I've put in the hours with DALL-E. I know its personality, its quirks, its strengths, and its weaknesses like the back of my hand.

- Strengths I Rely On: Whimsical illustrations, concept art for fantasy/sci-fi, stylized portraits, creating cohesive visual themes.
- Weaknesses I Work Around: Photorealistic human hands in complex poses, specific brand logos, generating perfect text (I usually add that in post with another tool).

This deep knowledge means I don't waste time. I know when a prompt will work, and I can instantly tell if a result is a 'one more try' situation or a 'time to pivot' moment. This efficiency is priceless. It also means I know exactly when to hand off to another tool in my kit, like using Canva for final resizing or typography, creating a powerful hybrid workflow.

👍 The Feedback Loop: Teaching the AI

I love that DALL-E isn't a black box. The simple thumbs-up/thumbs-down system is genius in its simplicity. It takes two seconds, but it feels like I'm having a conversation with the AI, guiding it toward what I like. 'More of this, less of that.' Over time, I swear it learns my preferences. The generations feel more tailored. It's a collaborative relationship, not just a one-way command.

🏆 The Verdict in 2026

So, why DALL-E? It's not always the absolute best at any one thing. Midjourney might have an edge in certain artistic styles. Stable Diffusion offers insane control for power users. But DALL-E? DALL-E is my workhorse. It's reliable, it's integrated into my daily workflow, it's constantly improving, and most importantly, I've mastered it.

It's the total package:

- Ease of Use: Lower barrier to entry means faster creation.
- Seamless Integration: My writing and image generation live together.
- Growing Toolkit: Editing features are a welcome and powerful addition.
- Accessibility: Create anywhere, on any device.
- Predictable Quality: I know what I'm going to get, which is crucial for professional work.

In the fast-paced world of AI, it's easy to get distracted by the next shiny new model. But for a consistent, high-quality, and integrated creative experience that just gets the job done, DALL-E remains my go-to. It's the comfortable, reliable pair of creative jeans in a closet full of flashy, untested suits. And in 2026, that reliability, paired with its ever-growing capabilities, is more valuable than ever.

This analysis draws on Newzoo insights into how creators and studios increasingly adopt integrated, low-friction toolchains to keep production cycles fast and consistent. That angle mirrors my 2026 DALL-E workflow, where reliability, cross-device access, and "good-on-the-first-try" output matter more than chasing the flashiest standalone generator.
